Business Intelligence :

Business Intelligence is a Combination of technologies like

• Data Warehousing (DW)

• On-Line Analytical Processing (OLAP)

• Data Mining (DM)

• Data Visualization (VIS)

• Decision Analysis (what-if)

• Customer Relationship Management (CRM)

Operational Data: (Transactional data)

Operational Data is exactly what it sounds like – data that is produced by your organization’s day to day operations

• Presents a dynamic view of the business

• Must be kept up-to-date and current at all times

• Updated by transactions entered by data-entry operators or specially trained end users

• Is maintained in detail

• Utilization is predictable. Systems can be optimized for projected workloads

• High volume of transactions, each of which affects a small portion of the data

Analytical Data:

Analytical Data is used to make business decisions

• End-user access is usually read-only

• More concerned with summary information

• Usage is unpredictable in terms of depth of information needed by the user

• Smaller number of queries, each of which may access large amounts of data

• Users need to understand the structure of the data (and business rules) to draw meaningful conclusions from the data

• Subject – orientation

Database:

Broadly classified into

• OLTP (Online Transactional Processing) DB

• OLAP (Online Analytical Processing) DB

OLAP vs OLTP

| OLTP – sources | OLAP – targets |

|---|---|

| Transaction Oriented | Decision Oriented (Reports) |

| Complex data model (fully normalised) | Simple data model (multidimensional/de-normalised) |

| Smaller data volume (few historical data) | Larger data volumes (collection of historical data) |

| Many, ”small” queries | Fewer, but ”bigger” queries |

| Frequent updates | Frequent reads, in-frequent updates (daily) |

| Huge no. of users(clerks). | Only few users(Management Personnel) |

Objective of Data Warehouse

The primary purpose of a data warehouse is to provide easy access to specially prepared data that can be used with decision support applications, such as management reporting, queries, decision support systems, and executive information systems.

Why Datawarehouse?

1. Ensure consistency: Standardizing data from different sources also reduces the risk of error in interpretation and improves overall accuracy.

2. Make better business decisions: Data warehousing improves the speed and efficiency of accessing different data sets and makes it easier for corporate decision-makers to derive insights that will guide the business and marketing strategies that set them apart from their competitors.

3. Improve their bottom line: Data warehousing improves the speed and efficiency of accessing different data sets and makes it easier for corporate decision-makers to derive insights that will guide the business and marketing strategies that set them apart from their competitors.

Data warehouse

• A decision support database that is maintained separately from the organization’s operational databases

• A Data Warehouse is an enterprise-wise collection of

• Subject oriented

• Integrated

• Time variant

• Non-volatile

1. Subject Oriented – Data warehouses focus on high-level business entities like sales, marketing, etc.

2. Integrated – Data in the warehouse is obtained from multiple sources and kept in a Consistent format.

3. Time-Varying – Every data component in the date warehouse associates itself with some point of time like weekly, monthly, quarterly and yearly.

4. Non-volatile – DWH stores historical data. Data does not change once it gets into the warehouse. Only load/refresh. Data from the operational systems are’

• Extracted

• Cleansed

• Transformed

- case conversion,

- data trimming,

- concatenation,

- datatype conversion

• Aggregated

• Loaded into DW

Use of DWH:

• Ad-hoc analyses and reports

• Data mining: identification of trends

• Management Information Systems

Designing a database for a Data Warehouse

1. Define User requirements, considering different views of users from different departments.

2. Identify data integrity, synchronization and security issues/bottlenecks.

3. Identify technology, performance, availability & utilization requirements.

4. Review normalized view of relational data to identify entities.

5. Identify dimensions.

6. Create and organize hierarchies of dimensions.

7. Identify attributes of dimensions.

8. Identify fact table(s).

9. Create data repository (metadata).

10. Add calculations.

Datamart

• Datamart is a subset of data warehouse and it is designed for a particular line of business, such as sales, marketing, or finance.

• In a dependent data mart, data can be derived from an enterprise-wide data warehouse.

• In an independent data mart, data can be collected directly from sources

• Datamart is the data warehouse you really use

• Why Datamart?

- Datawarehouse projects are very expensive and time taking.

- Success rate of DWH projects is very less

To avoid single point of loss we identify department wise needs and build Datamart. If succeeded we go for other departments and integrate all datamarts into a Datawarehouse.

• Advantages

◾ Improve data access performance

◾ Simplify end-user data structures

◾ Facilitate ad hoc reporting

| Data warehouse | Data mart |

|---|---|

| DW Operates on an enterprise level and contains all data used for reporting and analysis | Data Mart is used by a specific business department and is focused on a specific subject (business area).

DM is a subset of DWH |

A data-warehouse is a heterogeneous collection of different data sources organized under a unified schema. There are 2 approaches for constructing data-warehouse: Top-down approach and Bottom-up approach are explained as below.

- Top-down approach:

Advantages of Top-Down Approach:

• Since the data marts are created from the Datawarehouse, provides consistent dimensional view of data marts.

• Also, this model is considered as the strongest model for business changes. That’s why, big organizations prefer to follow this approach.

• Creating data mart from Datawarehouse is easy.

Disadvantages of Top-Down Approach:

The cost, time taken in designing and its maintenance is very high. - Bottom-up approach:

• First, the data is extracted from external sources (same as happens in top-down approach).

• Then, the data go through the staging area (as explained above) and loaded into data marts instead of Datawarehouse. The data marts are created first and provide reporting capability. It addresses a single business area.

• These data marts are then integrated into Datawarehouse. This approach is given by Kinball as – data marts are created first and provides a thin view for analyses and Datawarehouse is created after complete data marts have been created.Advantages of Bottom-Up Approach:

• As the data marts are created first, so the reports are quickly generated.

• We can accommodate a greater number of data marts here and in this way Datawarehouse can be extended.

• Also, the cost and time taken in designing this model is low comparatively.

Disadvantage of Bottom-Up Approach:

This model is not strong as top-down approach as dimensional view of data marts is not consistent as it is in above approach.

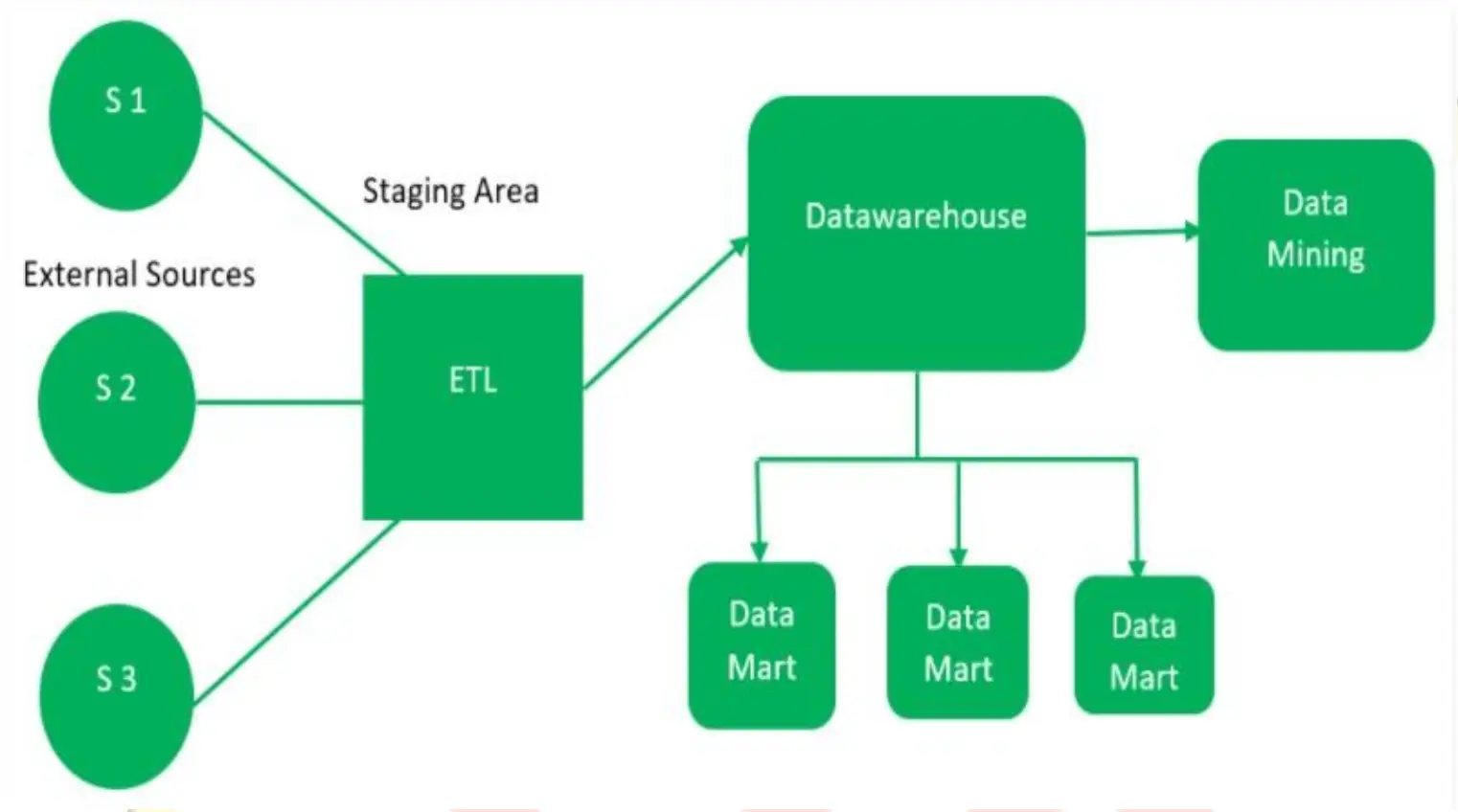

Datawarehouse architecture

Data warehouse architecture is a way of representing the overall structure of data, communication, processing and presentation that is planned, for end-user computing within the enterprise. The architecture has the following main parts:

ETL

1. ETL means Extraction, transformation, and loading.

2. ETL refers to the methods involved in accessing and manipulating source data and loading it into target database.

ETL Process:

Etl is a process that involves the following tasks:

• Extracting data from source operational or archive systems which are the primary source of data for the data warehouse

• Transforming the data – which may involve cleaning, filtering, validating and applying business rules

• Loading the data into a data warehouse or any other database or application that houses data

Transform:

1. Denormalize data

2. Data cleaning.

3. Case conversion

4. Data trimming

5. String concatenation

6. datatype conversion

7. Decoding

8. calculation

9. Data correction.

Cleansing:

The process of resolving inconsistencies and fixing the anomalies in source data, typically as part of the ETL process.

Data Staging Area:

1. Most complex part in the architecture.

2. A place where data is processed before entering the warehouse

3. It involves…

• Extraction (E)

• Transformation (T)

• Load (L)

• Indexing

Popular ETL Tools

| Tool Name | Company Name |

|---|---|

| Informatica | Informatica Corporation |

| DT/Studio | Embarcadero Technologies |

| DataStage | IBM |

| Ab Initio | Ab Initio Software Corporation |

| Data Junction | Pervasive Softwar |

| Oracle Warehouse Builder | Oracle Corporation |

| Microsoft SQL Server Integration | Microsoft Inc. |

| TransformOnDemand | Solonde |

| Transformation Manager | ETL Solutions |

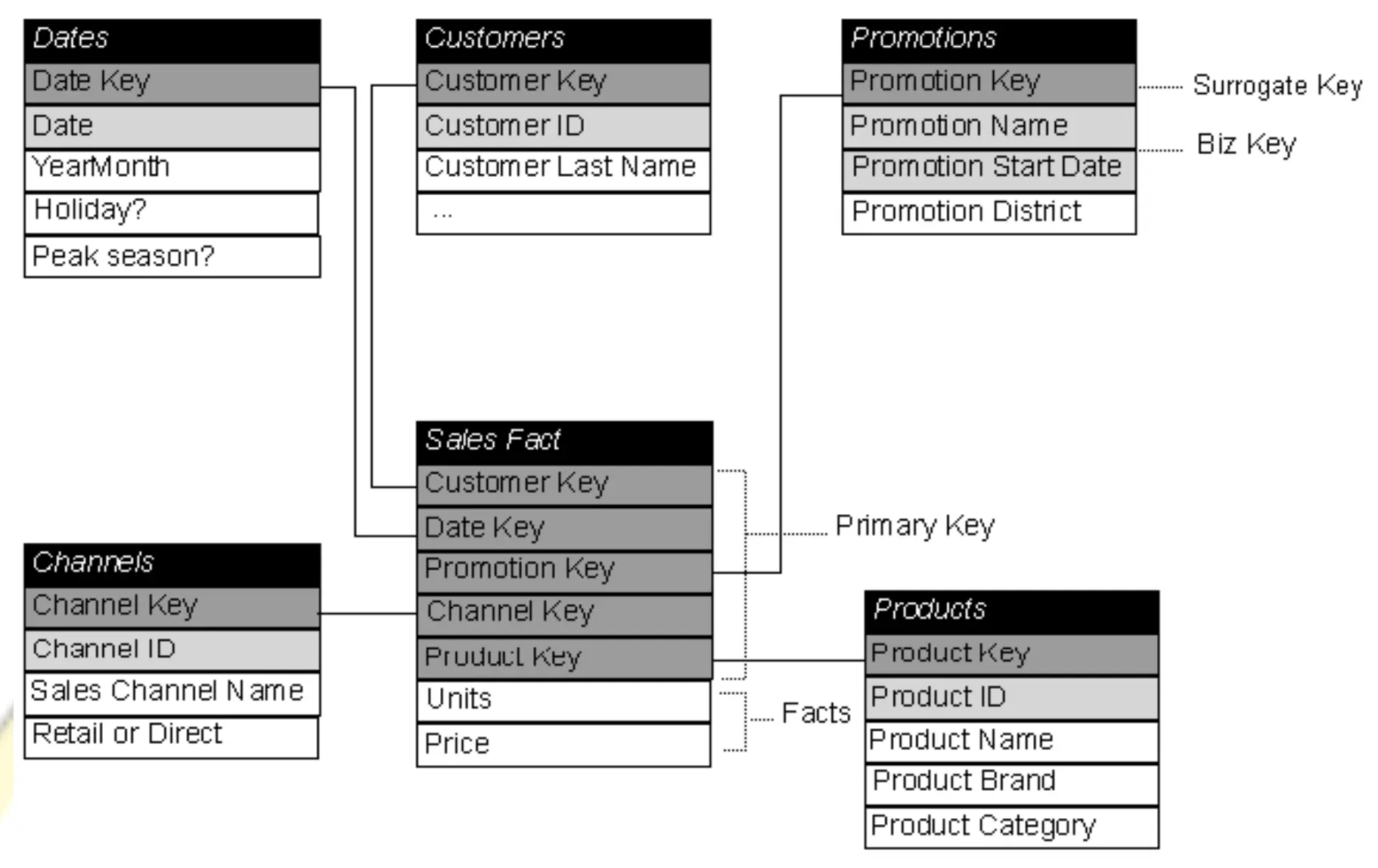

Dimensional Modeling

• Means storing data in fact and dimension tables.

• Here data is fully denormalized.

Dimension table

1. Dimension table gives the descriptive(STRING) attributes(COLUMNS) of a business.

2. They are fully denormalized

3. It has a primary key

4. Data arranged in hierarchical manner (product to category; month to year) – if so we can use for drill down and drill up analysis

5. Has less no. of records

6. Has rich no. of columns

7. Heavily indexed

8. Dimension tables are sometimes called lookup or reference tables.

Types of Dimensions

1. Normal Dimension

2. Confirmed Dimension

3. Junk Dimension

4. Degenerated Dimension

5. Role Playing Dimension

• Normal Dimension:

Dimension table contains the data about the business. The primary keys of the dimension tables are used in Fact tables with foreign key relationship. And the remaining columns in the dimension is normal data which is the information about the Objects related to the business.

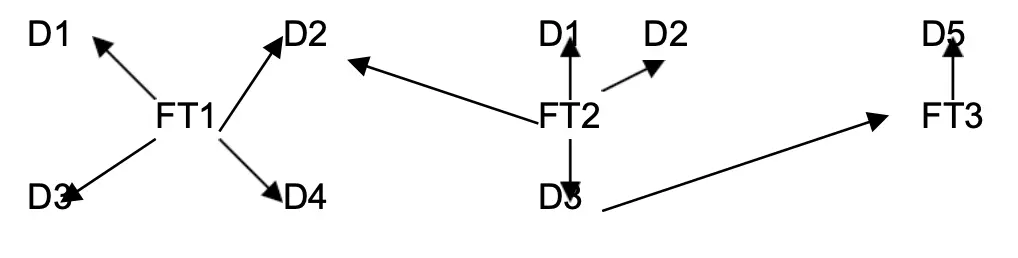

• Confirmed Dimension:

Dimension table used by more than one fact table is called Confirmed Dimensions (dimensions that are linked to multiple fact tables)

Adv:

1. To avoid unnecessary space

2. Reduce time

3. Drill across fact table

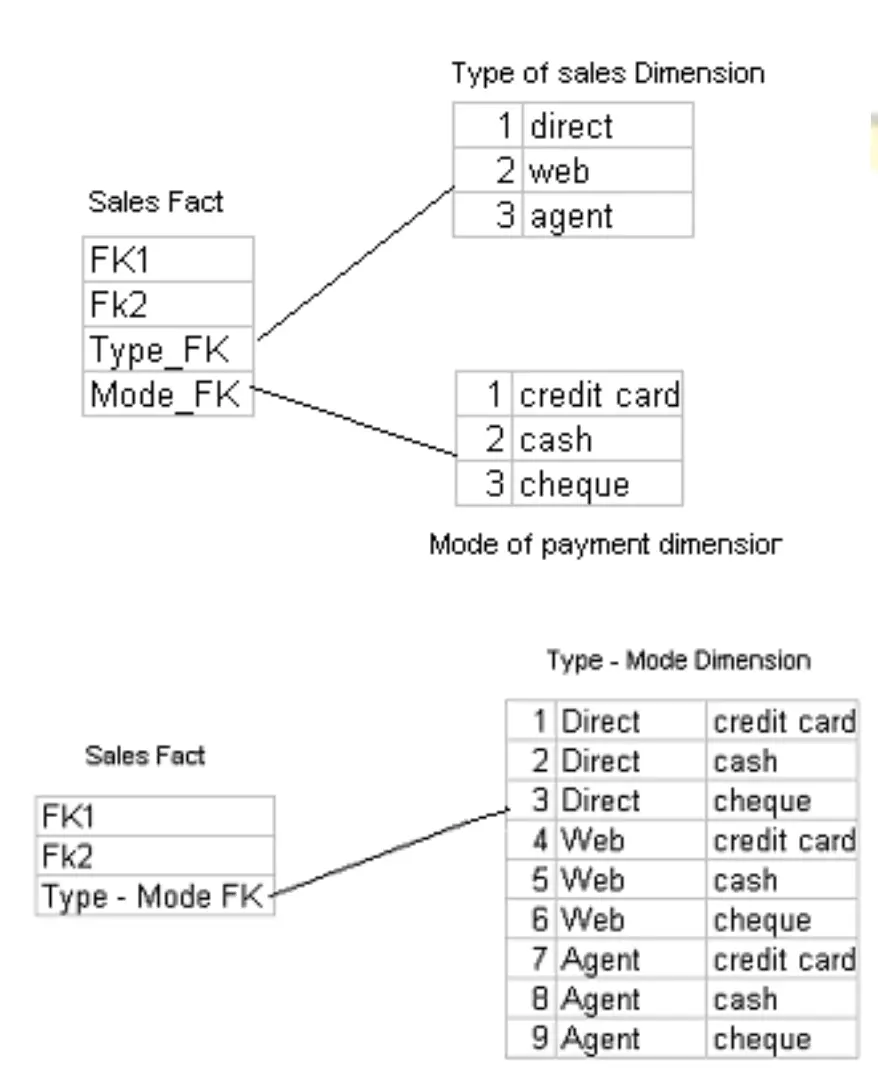

• Junk Dimension:

1. It is an abstract dimension it will remove number of foreign keys from fact table.

2. This is achieved by combining 2 or more dimensions into a single dimension.

• Degenerated Dimension:

Means a key value or dimension table which does not have descriptive attributes. i.e.) a non-foreign key and non-numerical measure column used for grouping purpose.

Ex: Invoice Number, Ticket Number

• Role Playing Dimension:

Means a single physical dimension table plays different role with the help of views.

Fact Table

1. The centralized table in a star schema is called as FACT table

2. A fact table typically has two types of columns:

• Numerical measures and

• Foreign keys to dimension tables.

3. The primary key of a fact table is usually a composite key that is made up of all of its foreign keys

4. Fact tables store different types of measures like

• additive,

• non additive and

• semi additive measures

5. A fact table might contain either detail level facts or facts that have been aggregated

6. A fact table usually contains facts with the same level of aggregation.

7. Has millions of records

Measure Types

• Additive – Measures that can be summarized across all dimensions.

Ex: sales

• Non-Additive – Measures that cannot be summarized across all dimensions.

Ex: averages

• Semi Additive – Measures that can be summarized across few dimensions and not with others.

Ex: inventory levels

Factless Fact

A fact table that contains no measures or facts is called as Factless Fact table.

Slowly Changing Dimensions

1. Dimensions that change over time are called Slowly Changing Dimensions.

2. Slowly Changing Dimensions (SCD) are often categorized into three types namely

SCD Type1, SCD Type2 and SCD Type3

• SCD Type1:

1. Used if history is not required

2. Overwriting the old values.

Product Price in 2004:

Product Price in 2005:

• SCD Type2:

1. If history and current value needed

2. Creating another additional record.(new record with new changes and new surrogate key)

3. Mostly preferred in dimensional modeling

Product

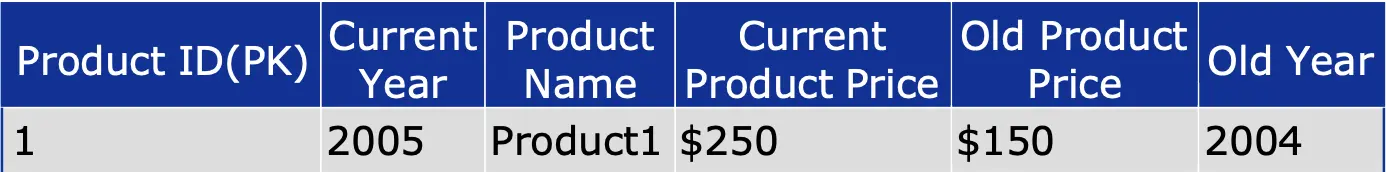

• SCD Type3:

1. Used if changes are very less

2. Previous one level of history available

3. Creating new fields.

Product Price in 2005

Surrogate keys

• Surrogate keys are always numeric and unique on a table level which makes it easy to distinguish and track values changed over time.

• Surrogate keys are integers that are assigned sequentially as needed to populate a dimension.

• Surrogate keys merely serve to join dimensional tables to the fact table.

Surrogate keys are beneficial as the following reasons:

1. Faster retrieval of data (since alphanumerical retrieval is costlier than numerical data)

2. Maintaining index is easier with numeric key.

3. Maintain all slowly changing dimension.

Data warehouse Design

The data warehouse design essentially consists of four steps, which are as follows:

1. Identifying facts and dimensions

2. Designing fact tables

3. Designing dimension tables

4. Designing database schemas

Types of database schemas

There are three main types of database schemas:

1. Star Schema

2. Snowflake Schema and

3. Starflake schema (Hybrid)

• Star Schema

1. It is the simplest form of data warehouse schema that contains one or more dimensions and fact tables

2. It is called a star schema because the entity-relationship diagram between dimensions and fact tables resembles a star where one fact table is connected to multiple dimensions

3. The center of the star schema consists of a large fact table and it points towards the dimension tables

4. Fact Table = Highly Normalized

Dimension Table = Highly

denormalized.

Advantages:

• Star schema is easy to define.

• It reduces the number of physical joins.

• Provides very simple metadata.

Drawbacks:

• Summary data in Fact tables (such as Sales amount by region, or district-wise, or year-wise) yields poor performance for summary levels and huge dimension tables.

Steps in designing Star Schema

1. Identify a business process for analysis (like sales).

2. Identify measures or facts (sales dollar).

3. Identify dimensions for facts (product dimension, location dimension, time dimension, organization dimension).

4. List the columns that describe each dimension. (Region name, branch name, employee name).

5. Determine the lowest level of summary in a fact table (sales dollar).

• Snowflake schema

1. A snowflake schema is a term that describes a star schema structure normalized through the use of outrigger tables. i.e dimension table hierarchies are broken into simpler tables

2. Represent dimensional hierarchy directly by normalizing the dimension tables ie) all dimensional information is stored in third normal form

3. This implies dividing the dimension tables into more tables, thus avoiding non-key attributes to be dependent on each other.

Advantages:

Snowflake schema provides best performance when queries involve aggregation.

Disadvantages:

• Maintenance is complicated.

• Increase in the number of tables.

• More joins will be needed.

Star Schema vs Snowflake Schema

| Star Schema | Snow Flake |

|---|---|

| Dimension table will not have any parent table | Dimension table will have one or more parent tables |

| Hierarchies for the dimensions are stored in the dimensional table itself | Hierarchies are broken into separate tables in snow flake schema |

| Less joins will be needed | More joins will be needed |

Granularity

• Means what detail data to be stored in fact table

• Types of Granularity

1. Transactional Level Granularity

2. Periodic Snapshot Granularity

Transactional Level Granularity

• Mostly used

• Each and every transaction stored in fact table

• Drill down and drill up analysis can be done

• Disadvantage: Size increases.

Periodic Snapshot Granularity

• Summarizing data over a period is stored in fact table

• Advantage: Faster retrieval (less records)

• Disadvantage: Detail information not available

What Is Metadata?

Metadata is information about other data that is contained inside a collection of data. Metadata summarizes essential facts about data, making it easy to search and deal with specific instances of data. Metadata can be generated both manually and automatically.

FAQ (Frequently Asked Questions)

Hierarchy

1. Hierarchies are logical structures that use ordered levels as a means of organizing data.

2. A hierarchy can be used to define data aggregation.

Example:

• country>city>state>zip

• in a time dimension, a hierarchy might be used to aggregate data from the Month level to the Quarter level, from the Quarter level to the Year level.

Level

A position in a hierarchy. For example, a time dimension might have a hierarchy that represents data at the Month, Quarter, and Year levels.

Operational Data Store

• In recent times, OLAP functionality is being built into OLTP systems which is called ODS (operational data store).

• A physical set of tables sitting between the operational systems and the data warehouse or a specially administered hot partition of the data warehouse itself.

• The main reason of ODS is to provide immediate reporting of operational results if neither the operational system nor the regular data warehouse can provide satisfactory accsee.

• Since an ODS is necessarily an extract of the operational data, it also may play the role of source for data warehouse.

Data Staging Area

1. A storage area that clean, transform, combine, duplicate and prepare source data for use in the data warehouse.

2. The data staging area is everything in between the source system and data presentation server.

3. No querying should be done in the data staging area because the data staging area normally is not set up to handle fine-grained security, indexing or aggregation for performance.

Data Warehouse Bus Matrix

1. The matrix helps prioritize which dimensions should be tackled first for conformity given their prominent roles.

2. The matrix allows us to communicate effectively within and across data mart teams.

3. The columns of the matrix represent the common dimensions.

4. The rows identify the organizations business processes.

Degenerated Dimension

Operational control numbers such as invoice numbers, order numbers and bill of lading numbers looks like dimension key in a fact table but do not join to any actual dimension table. They give rise to empty dimension hence we refer them as Degenerated Dimension (DD).

Operational Data Source (ODS):

• ODS stands for the operational data store, and it stores the most Recent data transfers from various operational sources and activities over data and monitoring.

• A central archive that provides a snapshot of the most recent data from multiple transactional processes for operational monitoring is known as an operational data store (ODS).

• It allows businesses to consolidate data in its original format from several sources into a single destination for market reporting.

What Are the Different Kinds of Dimensional Modeling?

Dimensional Modeling is classified into three groups, which are as follows:

• Conceptual Modeling

• Logical Modeling

• Physical Modeling

In Data Warehousing, What Is the Concept of a Cube?

Cubes are used to describe multidimensional data logically. The dimension members are located on the cube’s edge, and the data values are located on the cube’s body.

Leave a comment